The Illustrated BERT, ELMo, and co. (How NLP Cracked Transfer Learning) – Jay Alammar – Visualizing machine learning one concept at a time.

15.8. Bidirectional Encoder Representations from Transformers (BERT) — Dive into Deep Learning 1.0.0-beta0 documentation

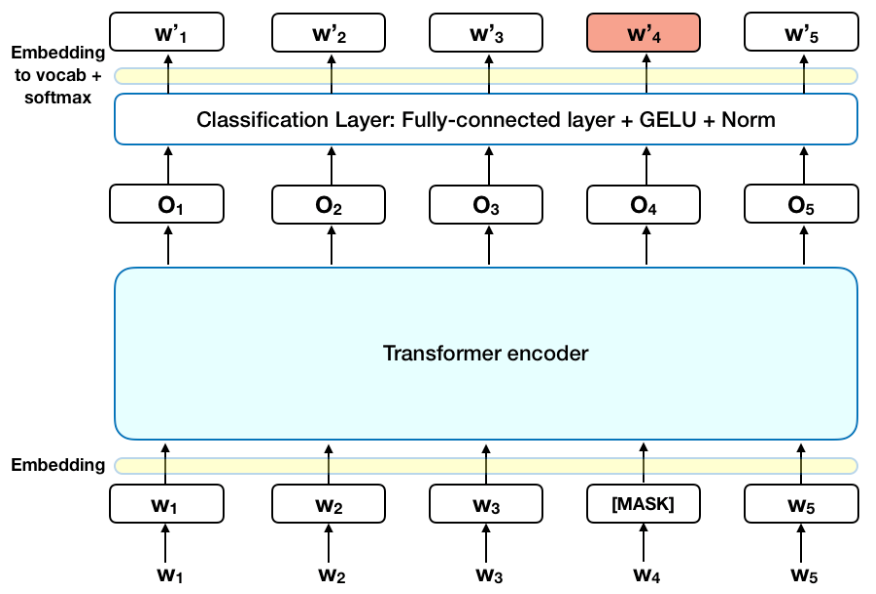

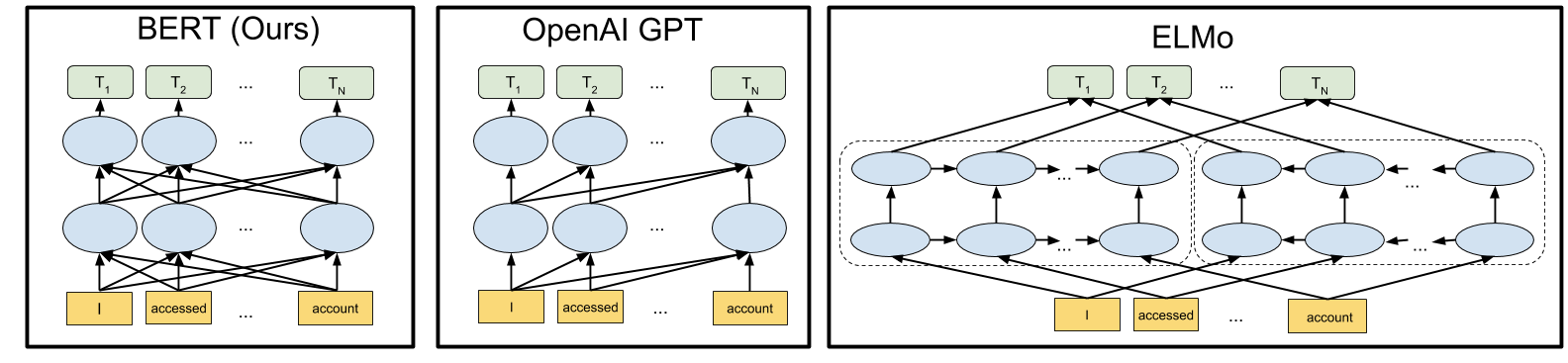

Differences between BERT, GPT, and ELMo. BERT uses a bi-directional...

Can GPT-3 or BERT Ever Understand Language?—The Limits of Deep Learning Language Models - neptune.ai

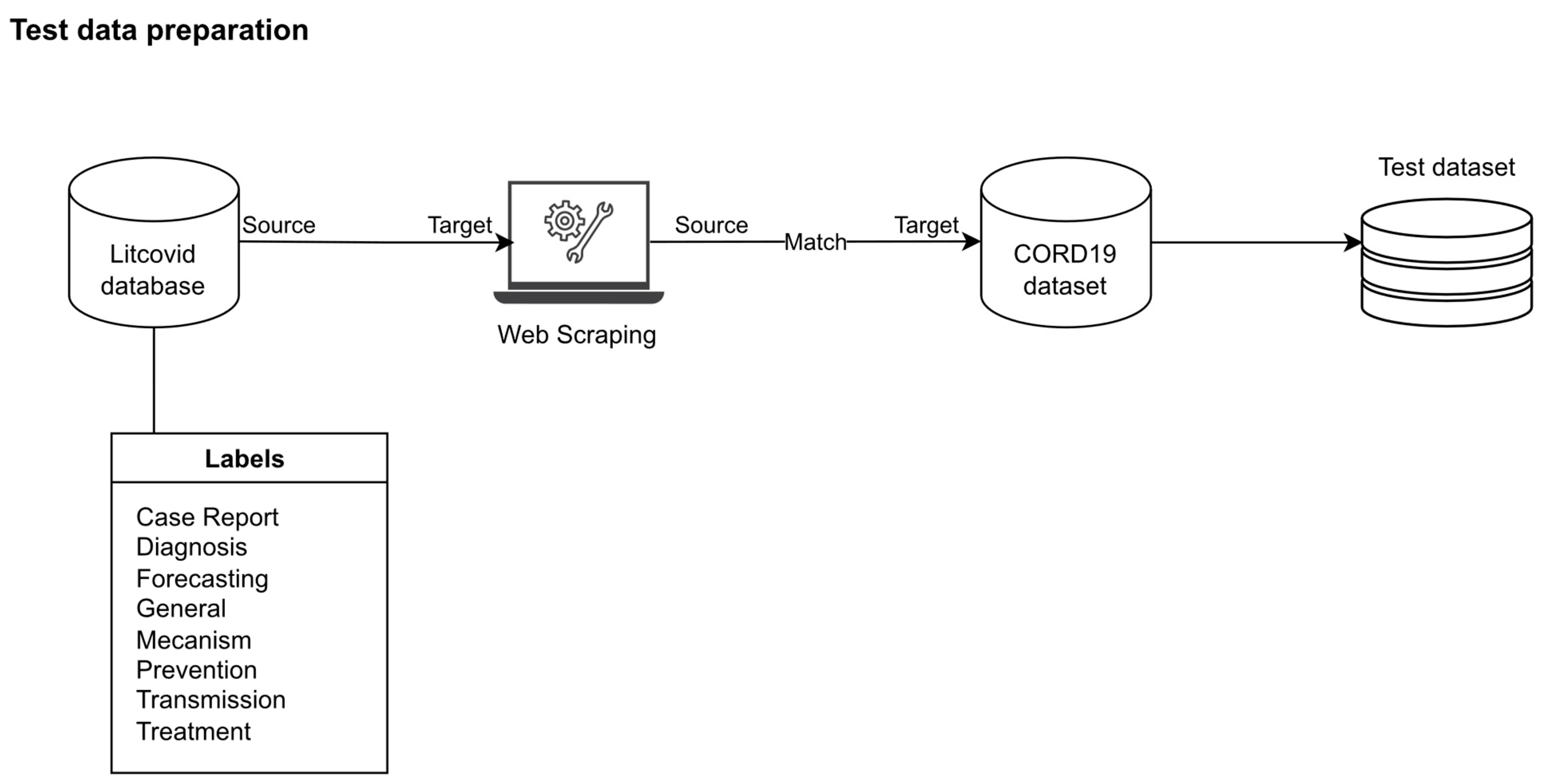

MAKE | Do We Need a Specific Corpus and Multiple High-Performance GPUs for Training the BERT Model? An Experiment on COVID-19 Dataset

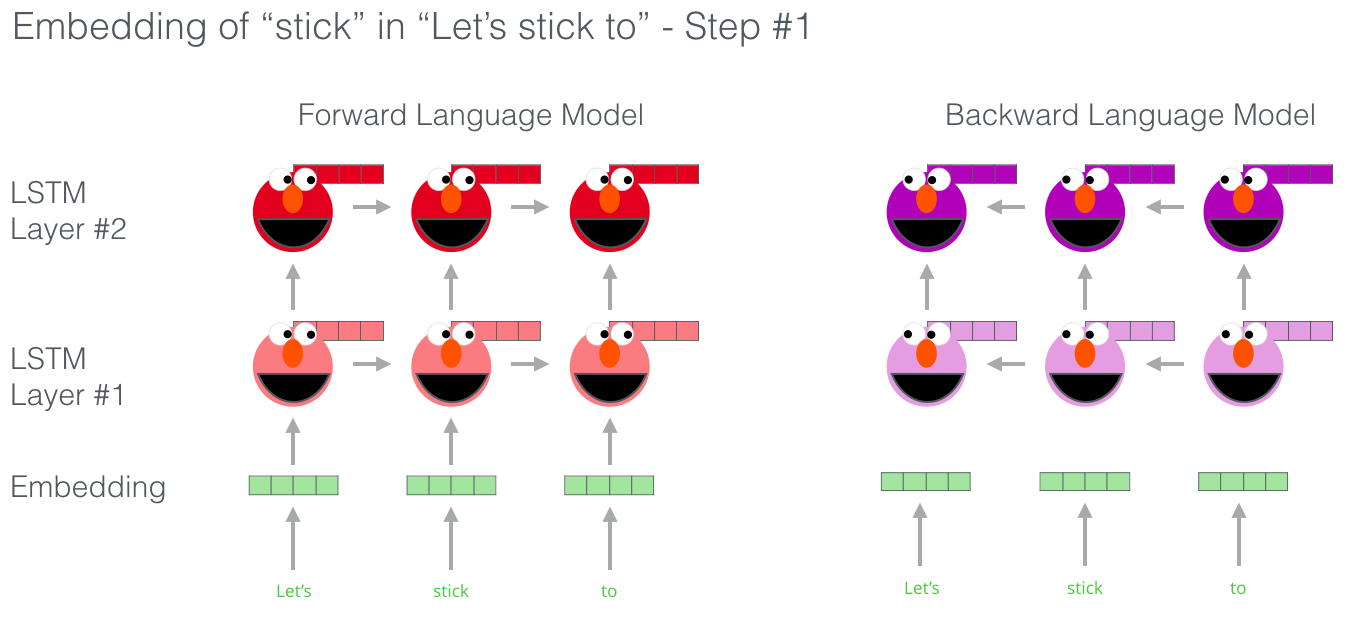

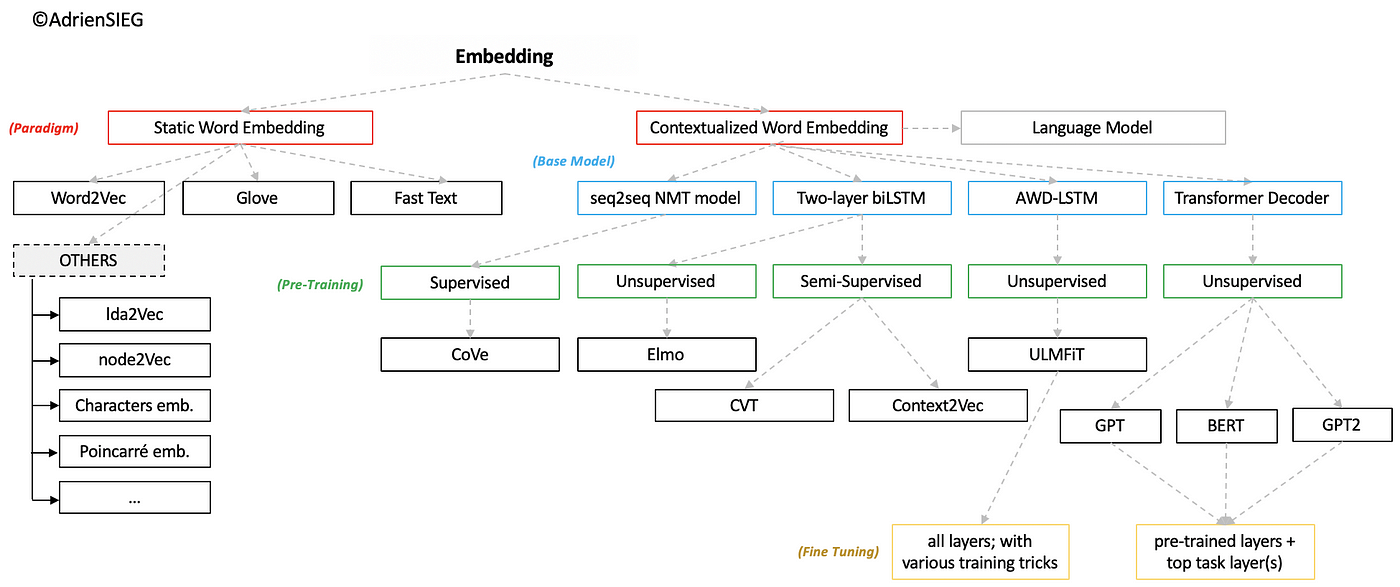

FROM Pre-trained Word Embeddings TO Pre-trained Language Models — Focus on BERT | by Adrien Sieg | Towards Data Science

![CharacterBERT: Reconciling ELMo and BERT for Word-Level Open-Vocabulary Representations From Characters | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/473921de1b52f98f34f37afd507e57366ff7d1ca/3-Figure2-1.png)

CharacterBERT: Reconciling ELMo and BERT for Word-Level Open-Vocabulary Representations From Characters | Semantic Scholar

![Comparison of BERT, OpenAI GPT and ELMo model architectures [5].](https://www.researchgate.net/publication/338931711/figure/fig1/AS:999328713822208@1615269940697/Comparison-of-BERT-OpenAI-GPT-and-ELMo-model-architectures-5.png)